Key Takeaways: The Cost of Contamination

01. B2B marketplaces are saturated with AI workslop: deliverables that look polished at first glance but contain deep logical failures, which later surface as security and product defects.

02. Remote managers are subsidizing this failure. Employers lose an average of 4.5 hours per employee each week cleaning up "finished" freelance work.

03. You cannot solve an algorithmic problem with human review. TaskVerified solves it architecturally: our Robot PM routes all submitted work through the 99.98% accurate Contamination Intelligence Protocol gate, blocking slop before it reaches your dashboard.

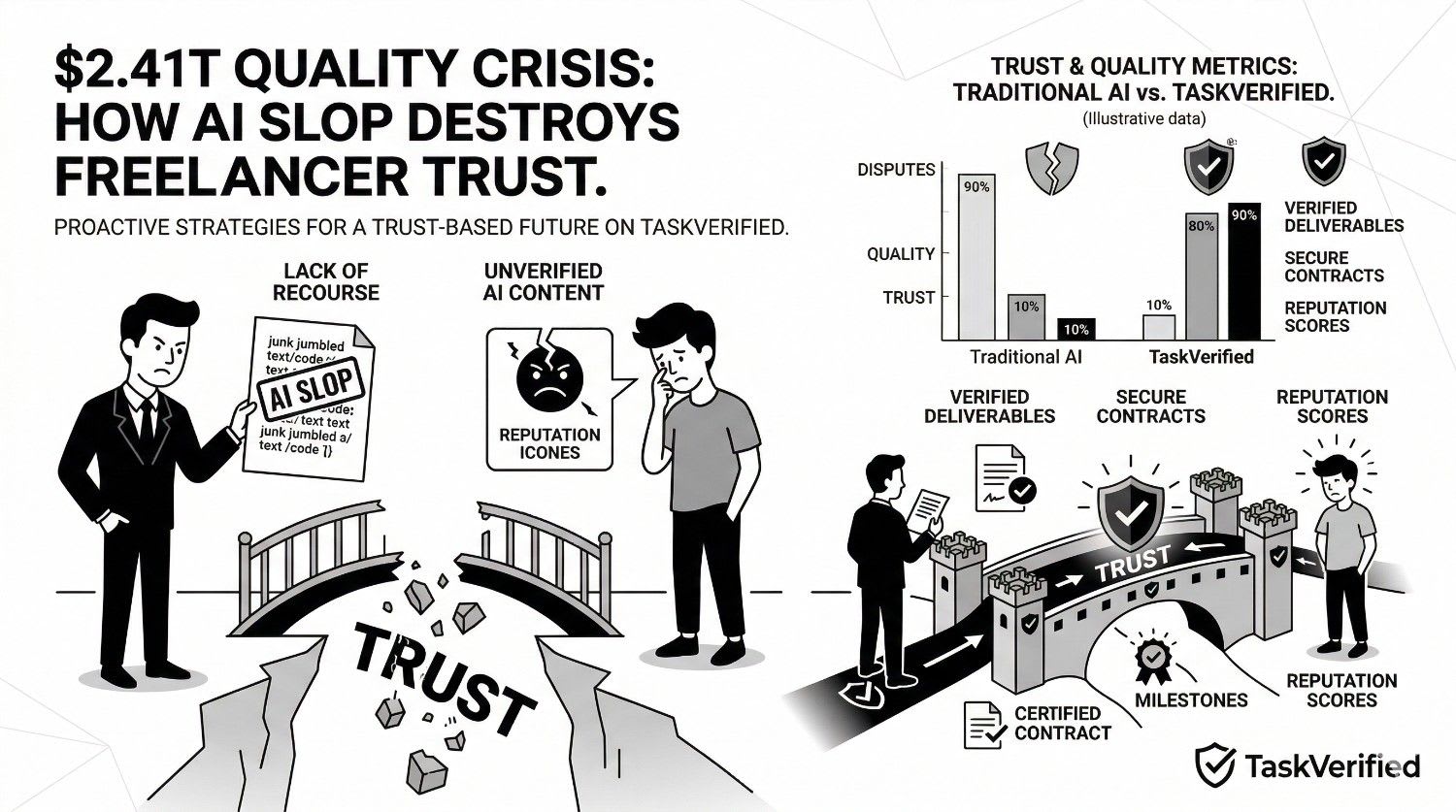

The remote workforce has entered a profound verification crisis. Legacy freelance marketplaces operate on the outdated assumption that delivered work is fundamentally human in origin. In 2026, that assumption is a measurable liability. Generative AI has flooded the B2B ecosystem with deliverables that are visually pristine but structurally vacuous, and this contamination is quietly eroding enterprise productivity at massive scale.

When you pay premium freelance rates for generative noise, you are not outsourcing work. You are outsourcing the rough drafting phase and paying a human premium for it. This is economically unsustainable. To quantify the problem, TaskVerified ran an internal benchmark: we audited a broad sample of public digital deliverables and combined the findings with eight pieces of external industry research. What we found discredits the current manual review model.

The Zapier Data: The Invisible 4.5-Hour Drain

Measuring the exact cost of administrative clean-up.

The most pressing issue with artificial intelligence in the workplace is not that it completes work poorly, but that its output looks falsely competent. When a remote worker submits an AI-generated module or report, it typically passes an initial visual inspection. The grammar is flawless. The formatting is impeccable. The variable names are clean.

The collapse happens only during operational execution. A January 2026 Zapier survey of 1,100 enterprise users exposed the administrative cost of this false competence: the average employee now spends 4.5 hours per week cleaning up, revising, or entirely redoing workslop.

This is not casual proofreading. It is 4.5 hours of structural rework every week, and much of it lands on senior managers. Priced at an average managerial rate of $50 per hour, that is $225 per employee per week spent sanitizing deliverables that were supposedly already "completed". Furthermore, 74 percent of Zapier respondents reported that this low-quality output led to downstream failures such as direct customer complaints, security incidents, and reputational damage.
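The $225 figure is simple multiplication, and it is easy to reproduce. The sketch below assumes, as the paragraph does, that every clean-up hour is paid at a flat $50 rate; the function name `weekly_cleanup_cost` and its optional `headcount` parameter are hypothetical, added only to show how the figure scales across a team.

```python
def weekly_cleanup_cost(hours_per_week: float, hourly_rate: float, headcount: int = 1) -> float:
    """Return the weekly cost of clean-up time across `headcount` people.

    Assumes every clean-up hour is paid at the same flat hourly rate.
    """
    if hours_per_week < 0 or hourly_rate < 0 or headcount < 0:
        raise ValueError("inputs must be non-negative")
    return hours_per_week * hourly_rate * headcount


# Figures from the Zapier data above: 4.5 hours per week at $50 per hour.
per_person = weekly_cleanup_cost(4.5, 50)
print(f"${per_person:,.2f} per person per week")  # $225.00 per person per week
```

For a hypothetical ten-person team, `weekly_cleanup_cost(4.5, 50, headcount=10)` returns `2250.0`.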

HBR Analysis: The Macro Productivity Destruction

How workslop erodes trust within a team.

The financial bleed is only the first symptom. The second is the erosion of organizational velocity. As the Harvard Business Review bluntly put it in 2025, AI-generated workslop is destroying productivity. The problem is rampant among managers, editors, and operational leaders who outsource work only to receive generative noise masquerading as human expertise.

When you lose trust in the source material, the entire review process turns into an adversarial audit. Before generative AI, a senior developer reviewing a pull request from a trusted contractor assumed the contractor had written and logically tested the code. A grammatical error in documentation was just a typo. Today, the same irregularity is read as a sign of systemic hallucination. Project managers are forced to assume that the contractor has no real understanding of the deliverable they just submitted. This paranoia adds a massive layer of friction to modern agile development.

Remote Labor Index: Automation vs. Execution

Why pure AI processing failed 96 percent of the time.

A common misconception among amateur project managers is that AI slop is "good enough" for standard operational tasks. If it looks correct, why not bypass freelancers entirely and let AI do the work natively? The Remote Labor Index (RLI), a benchmark that tested AI agents on real freelance projects, decisively refutes this thesis. Real-world project execution involves deep contextual decision logic, edge-case handling, and cross-system awareness that AI simply does not possess.

- 240 active projects assessed, spanning software, content, and deep analysis.
- 23 distinct professional categories, evaluated in a cross-functional matrix.
- $144,000 in total project value, measured at real market prices.

The AI models failed to meet professional business standards in 96 percent of the tests. Deliverables suffered from corrupted codebase structures, ignored scoping parameters, and fundamentally incomplete client requirements. Only 2.5 percent of tasks could be safely automated.

The verdict is unambiguous. Purely automated workflows fail catastrophically at the execution layer. The fundamental disconnect lies between "generative sourcing" (gathering ideas rapidly) and "true execution" (delivering a final, compilable codebase), and human expertise is required to bridge that gap. This is why project management must pivot urgently toward proactive architectural validation rather than retroactive human intervention.

Originality.ai: The Plague of Synthetic B2B Data

Analyzing the total saturation of the public review space.

The contamination is not isolated to complex engineering tasks; it has also corrupted basic commercial data. A 2025 case study by the detection firm Originality.ai audited 187,000 G2 software reviews and found that over 26 percent of them were generated entirely by AI models.
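Expressed as a count, 26 percent of 187,000 reviews is 48,620, so "over 26 percent" means more than 48,620 synthetic reviews. The hypothetical helper below performs that conversion with integer arithmetic, which avoids floating-point rounding when the percentage is a whole number.

```python
def share_of(total: int, percent: int) -> int:
    """Return `percent` percent of `total`, rounded down, using integer arithmetic."""
    return total * percent // 100


audited_reviews = 187_000  # G2 reviews in the Originality.ai audit
flagged_floor = share_of(audited_reviews, 26)
print(f"More than {flagged_floor:,} of {audited_reviews:,} reviews")
# More than 48,620 of 187,000 reviews
```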

If more than a quarter of the reviews on a highly visible, publicly verified B2B evaluation platform are synthetic, the situation facing private freelance deliverables is likely far worse, because those deliverables are never publicly scrutinized. Without a structured validation threshold, unaudited work passing through legacy marketplaces has no defense against large-scale automation abuse. Plagiarism is no longer just copying another human; it is the indiscriminate injection of hallucinated facts into your corporate IP.

NBC News: Double-Paying for Single Milestones

The bizarre emergence of the AI clean-up crew.

If you pay premium-tier B2B rates for generative output, you are participating in a broken economic equation. The most alarming trend in the remote gig economy is a secondary workforce hired strictly to fix the output of the first.

"NBC News reports that agencies and corporations are increasingly hiring human freelancers to serve exclusively as clean up crews for AI slop."

Instead of executing original logic or creating novel assets, these "human polishers" are hired to stabilize disjointed, vibe-coded applications and humanize rigid, AI-drafted documentation. Consider the financial inefficiency. The employer hires Freelancer A for $1,000. Freelancer A uses AI, undetected, to deliver immediately. The system looks functional but crashes under load testing. The employer is then forced to hire Freelancer B for $500 to audit and fix Freelancer A's work. The company has paid 50 percent more for a delayed and still intrinsically flawed architecture.
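The double-payment scenario reduces to a two-line calculation. In this sketch the function name `rework_overrun` is hypothetical, and the overrun counts only the direct repair fee relative to the original fee; delay costs are not included.

```python
def rework_overrun(original_fee: float, repair_fee: float) -> tuple[float, float]:
    """Return (total spend, overrun as a percentage of the original fee)."""
    if original_fee <= 0:
        raise ValueError("original_fee must be positive")
    total = original_fee + repair_fee
    overrun_pct = repair_fee / original_fee * 100
    return total, overrun_pct


# Freelancer A at $1,000, then Freelancer B at $500 to repair the work.
total, overrun = rework_overrun(1_000, 500)
print(f"Total ${total:,.0f}, {overrun:.0f}% over budget")  # Total $1,500, 50% over budget
```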

The Fiverr Reality: A 641 Percent Market Shift

When the core competency pivots to fixing robotic output.

The legacy platforms are openly confirming this operational decay, mistaking a systemic bug for a modern feature. Market data tracked by industry analysts and visible in Fiverr's own trend reporting points to a striking benchmark.

Fiverr recorded a 641 percent increase in searches for professionals offering to "humanize AI content" or restructure AI-generated application logic. This corroborates the Remote Labor Index findings: the baseline standard for gig deliverables has deteriorated. When the fastest-growing service on a marketplace is repairing broken work sourced from that same marketplace, the delivery model itself is broken.
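Percentage increases are easy to misread: a 641 percent rise means the final volume is 7.41 times the starting level, not 6.41 times. The sketch below makes that conversion explicit. The function name and the baseline of 1,000 searches are hypothetical, chosen only for illustration; only the 641 percent figure comes from the text.

```python
def after_increase(baseline: int, pct_increase: int) -> int:
    """Return the value after a whole-number percentage increase, rounded down.

    Computes baseline * (1 + pct/100) with integers to avoid float rounding.
    """
    return baseline * (100 + pct_increase) // 100


# Hypothetical baseline of 1,000 searches, grown by 641 percent.
print(after_increase(1_000, 641))  # 7410
```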

PeoplePerHour: The Downstream Rejection Cycle

The administrative burden of disputing slop.

The promise of the remote gig revolution was less mid-level managerial friction: you provide a scope and quickly engage an independent expert who delivers the outcome. As recent industry analyses involving platforms such as PeoplePerHour describe, this dynamic has inverted entirely.

AI slop is creating new layers of tedious administrative friction. Employers are now forced into absurd cycles: receive the unverified work, upload it manually to separate third-party detection tools, identify the hallucinated sections, build a compelling case that the freelancer used automation, open a formal mediation ticket, and argue in high-friction chat threads to recover their payment. The entire cycle violates the core principles of lean operations.

The Solution: TaskVerified and the Contamination Intelligence Protocol

Architectural prevention using the Robot Product Manager.

You cannot solve deep structural problems in software and process by relying on subjective human review. Volume problems call for algorithmic architecture, and that is the genesis of TaskVerified. We reject the premise that managers should manually filter out marketplace noise. Instead, we use a proprietary architectural layer called the Robot PM.


TaskVerified shifts responsibility from the human manager to the machine protocol. The Robot PM sits between the freelancer and the employer as a quality validation point that cannot be bypassed. The centerpiece of this framework is the native Contamination Intelligence Protocol. Acting as the primary gatekeeper, the protocol isolates semantic patterns indicative of machine generation and detects contamination with 99.98 percent accuracy.
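The gatekeeping pattern described above can be illustrated with a minimal sketch. Everything in it is hypothetical: `Submission`, `GateResult`, `contamination_gate`, the score field, and the 0.5 cutoff are placeholders, and the contamination score is assumed to come from some external detector. It shows only the control flow of a preemptive gate (score each submission, block anything at or above a cutoff before it reaches the employer), not TaskVerified's actual detection logic.

```python
from dataclasses import dataclass


@dataclass
class Submission:
    freelancer_id: str
    content: str
    contamination_score: float  # hypothetical detector output in [0.0, 1.0]


@dataclass
class GateResult:
    accepted: bool
    reason: str


def contamination_gate(sub: Submission, cutoff: float = 0.5) -> GateResult:
    """Block a submission whose contamination score meets or exceeds `cutoff`."""
    if not 0.0 <= sub.contamination_score <= 1.0:
        raise ValueError("contamination_score must be in [0.0, 1.0]")
    if sub.contamination_score >= cutoff:
        return GateResult(False, f"blocked: score {sub.contamination_score:.2f} >= {cutoff:.2f}")
    return GateResult(True, "passed contamination gate")


print(contamination_gate(Submission("f-001", "draft", 0.91)))
# GateResult(accepted=False, reason='blocked: score 0.91 >= 0.50')
```

Placing the check before delivery, rather than after, is what makes it preemptive: a blocked submission never reaches the employer's review queue.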

| Validation Criteria | Legacy Upwork / Fiverr Flow | TaskVerified Architecture |
|---|---|---|
| Contamination Tracking | Non-existent. You are entirely forced to deploy expensive third-party tools retroactively. | Zero-trust preemptive scanning, driven by the 99.98% accurate Contamination Intelligence Protocol. |
| Rejection Response | Endless chat disputes, emotional mediation, and multi-week project stalls. | Instant algorithmic hard stops. The upload route is severed cleanly and autonomously. |
| Technical Guardrails | Blind reliance on hopeful compliance and basic PDF reading accuracy. | 120+ forensic-grade quality gates covering Technical Syntax, AI Contamination Markers, and Legal Compliance. |
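The comparison above pairs many quality gates with instant hard stops. Assuming the gates run in sequence (an assumption; the ordering is not specified here), a short-circuiting pipeline captures that control flow. The three gate functions are hypothetical stand-ins named after the table's categories; their checks, including the `[[GENERATED]]` marker and the `License:` line, are deliberately trivial placeholders, not real syntax, contamination, or legal checks.

```python
from typing import Callable, Optional

# A gate returns None on success or a failure message on rejection.
Gate = Callable[[str], Optional[str]]


def syntax_gate(text: str) -> Optional[str]:
    # Placeholder check: reject empty deliverables.
    return None if text.strip() else "technical syntax: empty deliverable"


def marker_gate(text: str) -> Optional[str]:
    # Placeholder check: reject a hypothetical contamination marker string.
    return "AI contamination marker found" if "[[GENERATED]]" in text else None


def compliance_gate(text: str) -> Optional[str]:
    # Placeholder check: require a hypothetical license line.
    return None if "License:" in text else "legal compliance: missing license"


def run_gates(text: str, gates: list[Gate]) -> tuple[bool, Optional[str]]:
    """Run gates in order and stop at the first failure (a hard stop)."""
    for gate in gates:
        failure = gate(text)
        if failure is not None:
            return False, failure
    return True, None


GATES: list[Gate] = [syntax_gate, marker_gate, compliance_gate]
print(run_gates("Report body\nLicense: MIT", GATES))  # (True, None)
print(run_gates("[[GENERATED]] text\nLicense: MIT", GATES))
# (False, 'AI contamination marker found')
```

Because `run_gates` returns on the first failure, later gates never run on rejected work, which mirrors the "instant hard stop" behavior the table describes.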

When you deploy a global engineering or creative team, you cannot afford to audit thousands of lines of code or documentation manually. Trust works best when it is backed by verifiable evidence. By validating tasks at the ingestion layer, before work ever reaches a reviewer, we recover the 4.5 hours per week that clean-up typically consumes.

Instantly Block Commercial AI Slop

The remote ecosystem is buckling under artificial saturation, and the legacy assumption of unverified trust is obsolete. TaskVerified blocks contaminated deliverables at the point of submission, so every milestone cleared through our platform represents validated human work. Protect your operational budget from the rework cycle today.